K8S Setup Guide

Part 1: Prerequisites

Set a static IP address

Reset the network configuration with nmtui

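Method 3: use the nmtui menu-driven tool (simplest)
1. In a terminal, run: nmtui
2. Select "Edit a connection".
3. Select your network interface and press Enter.
4. Change IPv4 CONFIGURATION from <Automatic> to <Manual>.
5. Select <Show> to expand the section, then fill in Addresses (e.g. 192.168.1.100/24), Gateway, and DNS servers.
6. Select OK on each screen to exit.
7. Back in the main menu, select "Activate a connection", then deactivate and reactivate the interface so the change takes effect.

Note: remove any duplicate uuid; no two connection profiles may share the same uuid. RHEL 9 stores network configuration in /etc/NetworkManager/system-connections/ by default, in .nmconnection files.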

Set the hostname

    sudo hostnamectl set-hostname "k8snode1" && exec bash

Add hosts entries

    192.168.3.113 k8snode1
    192.168.3.114 k8snode2
    192.168.3.115 k8snode3

Disable swap permanently

    free -h
    sudo sed -i '/swap/d' /etc/fstab
    sudo swapoff -a
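The `sed -i '/swap/d'` command above deletes the fstab entry outright. A more cautious variant, sketched below, comments the entry out and keeps a backup so it can be restored later. The function name `disable_swap_entries` and the `.bak` suffix are illustrative choices, not part of the original steps, and the demo runs against a temporary file rather than the real /etc/fstab.

```shell
#!/usr/bin/env bash
# disable_swap_entries is a hypothetical helper name.
# It comments out active swap entries in an fstab-style file and keeps
# the untouched original next to it as <file>.bak.
disable_swap_entries() {
    local fstab="$1"
    # Only touch lines that are not already comments and that contain
    # "swap" as a whitespace-delimited field.
    sed -i.bak -E '/^[^#].*[[:space:]]swap[[:space:]]/ s/^/#/' "$fstab"
}

# Demo on a throwaway file instead of the real /etc/fstab.
demo_fstab="$(mktemp)"
cat > "$demo_fstab" <<'EOF'
/dev/mapper/rl-root /    xfs  defaults 0 0
/dev/mapper/rl-swap none swap defaults 0 0
EOF
disable_swap_entries "$demo_fstab"
cat "$demo_fstab"
rm -f "$demo_fstab" "$demo_fstab.bak"
```

On a real node this would be called as `sudo`-privileged code on /etc/fstab, still followed by `sudo swapoff -a` to turn swap off for the running system.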

Set SELinux to permissive mode

    sudo setenforce 0
    sudo sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config

Stop the firewall (on an internal network, simply disabling it is suggested; in production, open only the required ports)

    sudo systemctl stop firewalld
    sudo systemctl disable firewalld

Firewall ports to open

6443/tcp: Kubernetes API server

2379-2380/tcp: etcd server (stores the cluster data)

10250-10252/tcp: kubelet API and control-plane services

10257-10259/tcp: scheduler and controller manager

179/tcp: BGP (for the network plugin, if used)

4789/udp: VXLAN (for the pod network, if an overlay network is used)

    # On control-plane nodes
    $ sudo firewall-cmd --permanent --add-port={6443,2379,2380,10250,10251,10252,10257,10259,179}/tcp
    $ sudo firewall-cmd --permanent --add-port=4789/udp
    $ sudo firewall-cmd --reload

    # On worker nodes (30000-32767 is the NodePort range)
    $ sudo firewall-cmd --permanent --add-port={179,10250,30000-32767}/tcp
    $ sudo firewall-cmd --permanent --add-port=4789/udp
    $ sudo firewall-cmd --reload

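Load kernel modules

Kernel modules and parameters are essential for Kubernetes to manage cross-node network traffic and container communication. The overlay module provides the layered filesystem that containers use for efficient storage, while br_netfilter enables network filtering on bridged interfaces. Together they keep Pod-to-Pod networking working and allow Kubernetes network policies to be enforced.

In Kubernetes, Pods usually run in a virtual network (for example 10.244.x.x). When a Pod on Node A wants to reach a Pod on Node B, the packet must first pass through Node A's physical NIC and be forwarded by the host's network stack. If net.ipv4.ip_forward is not enabled, Node A's Linux kernel drops any packet whose destination IP is not one of its own, so Pods cannot reach each other.

Bridged networking and the firewall (bridge-netfilter): K8S commonly uses a bridge to connect container interfaces. The br_netfilter module makes packets that cross the bridge pass through iptables rules as well. Kubernetes Services (ClusterIP) rely heavily on iptables or ipvs for load balancing. Without this module, bridge traffic bypasses those filtering and routing rules, and Services become unreachable.

net.bridge.bridge-nf-call-iptables = 1 passes bridged traffic through iptables, which is essential for routing between nodes and pods.

net.ipv4.ip_forward = 1 enables IP forwarding, which pod-to-pod communication across node interfaces requires.

net.bridge.bridge-nf-call-ip6tables = 1 passes IPv6 traffic on bridged interfaces through ip6tables, which matters in IPv6 environments.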

    cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf
    net.bridge.bridge-nf-call-iptables = 1
    net.bridge.bridge-nf-call-ip6tables = 1
    net.ipv4.ip_forward = 1
    EOF
    sudo sysctl --system
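Before relying on these settings, it can help to confirm that the drop-in file really contains all three keys with the value 1. The following is a minimal sketch; `check_k8s_sysctl_conf` is a hypothetical helper name, and it only inspects the file rather than the running kernel.

```shell
#!/usr/bin/env bash
# check_k8s_sysctl_conf is a hypothetical helper name. It inspects a file only;
# it does not query the running kernel.
check_k8s_sysctl_conf() {
    local conf="$1" key rc=0
    for key in net.bridge.bridge-nf-call-iptables \
               net.bridge.bridge-nf-call-ip6tables \
               net.ipv4.ip_forward; do
        # Split each line on "=", strip whitespace, and look for "<key> = 1".
        if ! awk -F= -v k="$key" '
                { gsub(/[[:space:]]/, "", $1); gsub(/[[:space:]]/, "", $2) }
                $1 == k && $2 == "1" { found = 1 }
                END { exit !found }' "$conf"; then
            echo "missing or not set to 1: $key"
            rc=1
        fi
    done
    return "$rc"
}

# Demo against a temporary file that deliberately omits ip_forward.
demo_conf="$(mktemp)"
printf '%s\n' 'net.bridge.bridge-nf-call-iptables = 1' \
              'net.bridge.bridge-nf-call-ip6tables = 1' > "$demo_conf"
check_k8s_sysctl_conf "$demo_conf" || echo "conf is incomplete"
rm -f "$demo_conf"
```

On a node, the same function could be pointed at /etc/sysctl.d/k8s.conf. The live values can be read with `sysctl net.ipv4.ip_forward`; the net.bridge.* keys only appear once br_netfilter is loaded.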

    # Load these modules on every boot
    cat <<EOF | sudo tee /etc/modules-load.d/k8s.conf
    overlay
    br_netfilter
    ip_vs
    ip_vs_rr
    ip_vs_wrr
    ip_vs_sh
    nf_conntrack
    EOF

    # Load them now without rebooting
    sudo modprobe -- overlay
    sudo modprobe -- br_netfilter
    sudo modprobe -- ip_vs
    sudo modprobe -- ip_vs_rr
    sudo modprobe -- ip_vs_wrr
    sudo modprobe -- ip_vs_sh
    sudo modprobe -- nf_conntrack
    lsmod | grep -e br_netfilter -e ip_vs

    # Apply the sysctl settings once br_netfilter is loaded
    # (the net.bridge.* keys do not exist before that)
    cat <<EOF | sudo tee /etc/sysctl.d/k8s.conf
    net.bridge.bridge-nf-call-iptables = 1
    net.bridge.bridge-nf-call-ip6tables = 1
    net.ipv4.ip_forward = 1
    EOF
    sudo sysctl --system

Resource limits, in /etc/security/limits.conf format:

    * soft nofile 65536
    * hard nofile 65536
    * soft nproc 65536
    * hard nproc 65536

Install containerd with dnf

    sudo dnf config-manager --add-repo https://download.docker.com/linux/centos/docker-ce.repo
    sudo dnf install -y containerd.io
    containerd config default | sudo tee /etc/containerd/config.toml > /dev/null
    sudo sed -i 's/SystemdCgroup = false/SystemdCgroup = true/' /etc/containerd/config.toml

After the edit, the runc options section of config.toml reads:

    [plugins.'io.containerd.cri.v1.runtime'.containerd.runtimes.runc.options]
      SystemdCgroup = true

Check the sandbox image configured in containerd

    grep -i sandbox /etc/containerd/config.toml

    [plugins.'io.containerd.cri.v1.images']
      sandbox_image = "registry.k8s.io/pause:3.10.1"

containerd registry mirror directory

    grep -A5 'config_path' /etc/containerd/config.toml

    [plugins.'io.containerd.cri.v1.images'.registry]
      config_path = "/etc/containerd/certs.d"

    mkdir -p /etc/containerd/certs.d/registry.k8s.io
    mkdir -p /etc/containerd/certs.d/k8s.gcr.io
    mkdir -p /etc/containerd/certs.d/docker.io

    cat > /etc/containerd/certs.d/registry.k8s.io/hosts.toml << 'EOF'
    server = "https://registry.k8s.io"

    [host."https://m.daocloud.io/registry.k8s.io"]
      capabilities = ["pull", "resolve"]

    [host."https://k8s.m.daocloud.io"]
      capabilities = ["pull", "resolve"]
    EOF

    cat > /etc/containerd/certs.d/docker.io/hosts.toml << 'EOF'
    server = "https://docker.io"

    [host."https://mirror.baidubce.com"]
      capabilities = ["pull", "resolve"]

    [host."https://docker.m.daocloud.io"]
      capabilities = ["pull", "resolve"]

    [host."https://dockerproxy.com"]
      capabilities = ["pull", "resolve"]
    EOF

    cat > /etc/containerd/certs.d/k8s.gcr.io/hosts.toml << 'EOF'
    server = "https://k8s.gcr.io"

    [host."https://registry.aliyuncs.com/google_containers"]
      capabilities = ["pull", "resolve"]
    EOF

    sudo systemctl restart containerd
    sudo systemctl enable containerd
    sudo journalctl -u containerd -f

Add kubernetes.repo

Kubernetes tools (such as kubeadm, kubectl, and kubelet) are not available in the default package repositories of Rocky Linux 9 or AlmaLinux 9. To install them, add the following repository on all nodes.

    cat <<EOF | sudo tee /etc/yum.repos.d/kubernetes.repo
    [kubernetes]
    name=Kubernetes
    baseurl=https://pkgs.k8s.io/core:/stable:/v1.29/rpm/
    enabled=1
    gpgcheck=1
    gpgkey=https://pkgs.k8s.io/core:/stable:/v1.29/rpm/repodata/repomd.xml.key
    EOF

Unset proxies

    unset http_proxy https_proxy HTTP_PROXY HTTPS_PROXY
    export no_proxy="127.0.0.1,localhost,10.0.0.0/8,192.168.0.0/16"

Part 2: Install K8s

Install with kubeadm

Install kubelet, kubeadm, and kubectl

    sudo dnf install -y kubelet kubeadm kubectl --disableexcludes=kubernetes

Pre-pull the K8s images

    kubeadm config images pull
    kubeadm config images list
    crictl pull registry.k8s.io/pause:3.10.1

Initialize the control plane

    kubeadm init --control-plane-endpoint=k8snode1 --pod-network-cidr=10.244.0.0/16 --v=5

On success, kubeadm prints:

    Your Kubernetes control-plane has initialized successfully!

    To start using your cluster, you need to run the following as a regular user:

      mkdir -p $HOME/.kube
      sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
      sudo chown $(id -u):$(id -g) $HOME/.kube/config

    Alternatively, if you are the root user, you can run:

      export KUBECONFIG=/etc/kubernetes/admin.conf

    You should now deploy a pod network to the cluster.
    Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:
      https://kubernetes.io/docs/concepts/cluster-administration/addons/

    You can now join any number of control-plane nodes by copying certificate authorities
    and service account keys on each node and then running the following as root:

      kubeadm join k8snode1:6443 --token g0i3rg.mp1gc1dw1fmtqcib \
        --discovery-token-ca-cert-hash sha256:1d1c772ec0486825e21c26dafca5eb139570af3caf062dcee25e9decebf161c2 \
        --control-plane

    Then you can join any number of worker nodes by running the following on each as root:

      kubeadm join k8snode1:6443 --token g0i3rg.mp1gc1dw1fmtqcib \
        --discovery-token-ca-cert-hash sha256:1d1c772ec0486825e21c26dafca5eb139570af3caf062dcee25e9decebf161c2

Configure kubeconfig

    $ mkdir -p $HOME/.kube
    $ sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config
    $ sudo chown $(id -u):$(id -g) $HOME/.kube/config

Install the CNI network plugin

    ls /etc/cni/net.d/
    kubectl apply -f "https://docs.projectcalico.org/manifests/calico.yaml"

Verify the installation

    kubectl get pods -n kube-system
    kubectl get nodes
    kubectl get componentstatuses
    systemctl status kubelet
    systemctl status containerd
    kubectl get nodes -o wide
    kubectl get pods -n kube-system -o wide
    kubectl get pods -n kube-flannel
    kubectl describe node k8snode1 | tail -20
    crictl ps -a | grep apiserver
    systemctl status kubelet
    journalctl -xeu kubelet --no-pager | tail -20
    ss -tlnp | grep 6443

K8s 二进制的安装

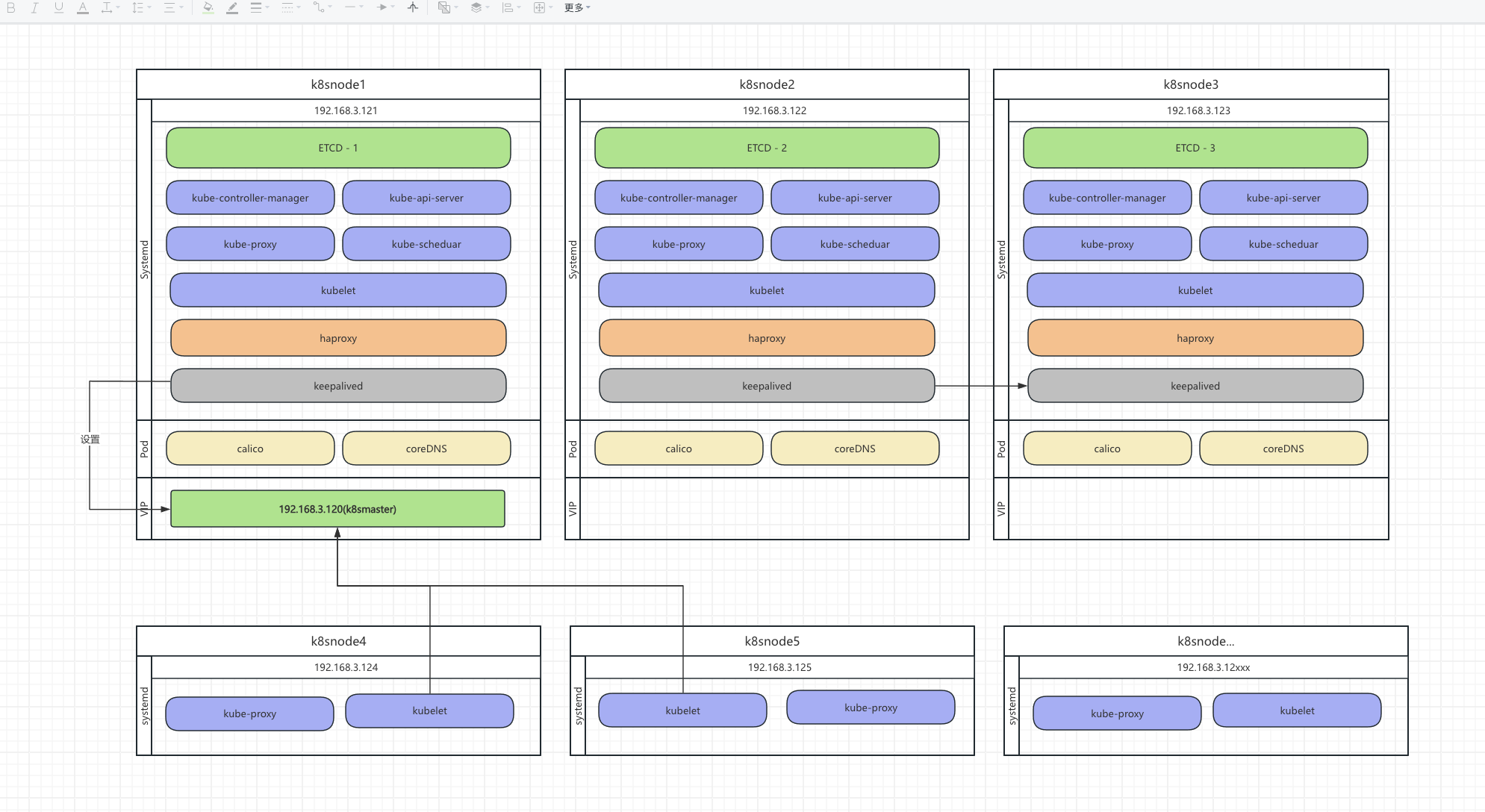

架构图如下

配置hosts文件

1 2 3 4 5 6 7 8 9 10 192.168.3.120 k8smaster (VIP) 192.168.3.121 k8snode1 k8smaster1 etcd1 192.168.3.122 k8snode2 k8smaster2 etcd2 192.168.3.123 k8snode3 k8smaster3 etcd3 192.168.3.124 k8snode4 k8worker1 192.168.3.125 k8snode5 k8worker2 192.168.3.126 k8snode6 k8worker3 192.168.3.127 k8snode7 k8worker4 ...

Generate ca.key and ca.crt

    openssl genrsa -out ca.key 2048
    openssl req -x509 -new -nodes -key ca.key -subj "/C=CN/ST=Beijing/L=Beijing/O=k8s/OU=System/CN=kubernetes" -days 3650 -out ca.crt
    chmod 755 ./*

For reference, see https://kubernetes.io/zh-cn/docs/setup/best-practices/certificates/

Generate the etcd certificate

Create etcd_ssl.cnf

    [ req ]
    req_extensions = v3_req
    distinguished_name = req_distinguished_name

    [ req_distinguished_name ]

    [ v3_req ]
    basicConstraints = CA:FALSE
    keyUsage = nonRepudiation, digitalSignature, keyEncipherment
    subjectAltName = @alt_names

    [ alt_names ]
    IP.1 = 127.0.0.1
    IP.2 = 192.168.3.121
    IP.3 = 192.168.3.122
    IP.4 = 192.168.3.123
    DNS.1 = localhost
    DNS.2 = k8snode1
    DNS.3 = k8smaster1
    DNS.4 = etcd1
    DNS.5 = k8snode2
    DNS.6 = k8smaster2
    DNS.7 = etcd2
    DNS.8 = k8snode3
    DNS.9 = k8smaster3
    DNS.10 = etcd3
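Numbering the IP.n and DNS.n entries by hand is easy to get wrong when nodes are added. As a rough sketch, the `[ alt_names ]` section can be generated from a list of names; `gen_alt_names` is a hypothetical helper, and its rule (only digits and dots means an IPv4 address, anything else is a DNS name) is a simplifying assumption.

```shell
#!/usr/bin/env bash
# gen_alt_names is a hypothetical helper name.
# Arguments made only of digits and dots are emitted as IP.n entries;
# everything else is emitted as a DNS.n entry.
gen_alt_names() {
    local ip_n=0 dns_n=0 name
    echo "[ alt_names ]"
    for name in "$@"; do
        case "$name" in
            *[!0-9.]*)
                dns_n=$((dns_n + 1))
                echo "DNS.${dns_n} = ${name}"
                ;;
            *)
                ip_n=$((ip_n + 1))
                echo "IP.${ip_n} = ${name}"
                ;;
        esac
    done
}

# Demo: a shortened version of the etcd SAN list above.
gen_alt_names 127.0.0.1 192.168.3.121 localhost k8snode1 etcd1
```

The output can be appended to the fixed part of the cnf file, so that the same list of hosts drives both the etcd and the API server certificates.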

Generate the etcd server private key

    openssl genrsa -out etcd_server.key 2048

Generate the certificate signing request (CSR)

    openssl req -new -key etcd_server.key \
      -config etcd_ssl.cnf \
      -subj "/CN=etcd-server" \
      -out etcd_server.csr

Issue the certificate

    openssl x509 -req -in etcd_server.csr \
      -CA ca.crt -CAkey ca.key \
      -CAcreateserial \
      -days 36500 \
      -extensions v3_req \
      -extfile etcd_ssl.cnf \
      -out etcd_server.crt

Download and install etcd

Download

    mkdir ~/etcd.download
    cd ~/etcd.download
    ETCD_VER=v3.6.10
    wget https://github.com/etcd-io/etcd/releases/download/${ETCD_VER}/etcd-${ETCD_VER}-linux-arm64.tar.gz
    tar xzvf etcd-${ETCD_VER}-linux-arm64.tar.gz
    # etcd and etcdctl are single binaries inside the extracted directory
    cp ./etcd-${ETCD_VER}-linux-arm64/etcd /usr/local/bin/
    cp ./etcd-${ETCD_VER}-linux-arm64/etcdctl /usr/local/bin/
    etcd --version

Distribute the certificates

    # Create both directories on every etcd node before copying
    sudo mkdir -p /etc/etcd/data
    sudo mkdir -p /etc/etcd/ssl
    # The same three files must also be present in /etc/etcd/ssl/ on k8snode1
    scp ca.crt etcd_server.crt etcd_server.key root@k8snode2:/etc/etcd/ssl/
    scp ca.crt etcd_server.crt etcd_server.key root@k8snode3:/etc/etcd/ssl/

Create the configuration files

On k8snode1:

    cat <<EOF | sudo tee /etc/etcd/etcd.conf
    # [Member]
    ETCD_NAME="etcd-1"
    ETCD_DATA_DIR="/etc/etcd/data"
    # Listen addresses should use IPs, so that a DNS resolver that is not yet up cannot break listening
    ETCD_LISTEN_PEER_URLS="https://192.168.3.121:2380"
    ETCD_LISTEN_CLIENT_URLS="https://192.168.3.121:2379,https://127.0.0.1:2379"

    # [Clustering]
    # Advertised addresses use the names from the hosts file
    ETCD_INITIAL_ADVERTISE_PEER_URLS="https://etcd1:2380"
    ETCD_ADVERTISE_CLIENT_URLS="https://etcd1:2379"
    ETCD_INITIAL_CLUSTER="etcd-1=https://etcd1:2380,etcd-2=https://etcd2:2380,etcd-3=https://etcd3:2380"
    ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_INITIAL_CLUSTER_STATE="new"

    # [Security]
    ETCD_CERT_FILE="/etc/etcd/ssl/etcd_server.crt"
    ETCD_KEY_FILE="/etc/etcd/ssl/etcd_server.key"
    ETCD_TRUSTED_CA_FILE="/etc/etcd/ssl/ca.crt"
    ETCD_CLIENT_CERT_AUTH="true"
    ETCD_PEER_CERT_FILE="/etc/etcd/ssl/etcd_server.crt"
    ETCD_PEER_KEY_FILE="/etc/etcd/ssl/etcd_server.key"
    ETCD_PEER_TRUSTED_CA_FILE="/etc/etcd/ssl/ca.crt"
    ETCD_PEER_CLIENT_CERT_AUTH="true"

    # [Logging]
    ETCD_LOGGER="zap"
    ETCD_LOG_OUTPUTS="stderr"
    EOF

On k8snode2:

    cat <<EOF | sudo tee /etc/etcd/etcd.conf
    # [Member]
    ETCD_NAME="etcd-2"
    ETCD_DATA_DIR="/etc/etcd/data"
    ETCD_LISTEN_PEER_URLS="https://192.168.3.122:2380"
    ETCD_LISTEN_CLIENT_URLS="https://192.168.3.122:2379,https://127.0.0.1:2379"

    # [Clustering]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="https://etcd2:2380"
    ETCD_ADVERTISE_CLIENT_URLS="https://etcd2:2379"
    ETCD_INITIAL_CLUSTER="etcd-1=https://etcd1:2380,etcd-2=https://etcd2:2380,etcd-3=https://etcd3:2380"
    ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_INITIAL_CLUSTER_STATE="new"

    # [Security]
    ETCD_CERT_FILE="/etc/etcd/ssl/etcd_server.crt"
    ETCD_KEY_FILE="/etc/etcd/ssl/etcd_server.key"
    ETCD_TRUSTED_CA_FILE="/etc/etcd/ssl/ca.crt"
    ETCD_CLIENT_CERT_AUTH="true"
    ETCD_PEER_CERT_FILE="/etc/etcd/ssl/etcd_server.crt"
    ETCD_PEER_KEY_FILE="/etc/etcd/ssl/etcd_server.key"
    ETCD_PEER_TRUSTED_CA_FILE="/etc/etcd/ssl/ca.crt"
    ETCD_PEER_CLIENT_CERT_AUTH="true"

    # [Logging]
    ETCD_LOGGER="zap"
    ETCD_LOG_OUTPUTS="stderr"
    EOF

On k8snode3:

    cat <<EOF | sudo tee /etc/etcd/etcd.conf
    # [Member]
    ETCD_NAME="etcd-3"
    ETCD_DATA_DIR="/etc/etcd/data"
    ETCD_LISTEN_PEER_URLS="https://192.168.3.123:2380"
    ETCD_LISTEN_CLIENT_URLS="https://192.168.3.123:2379,https://127.0.0.1:2379"

    # [Clustering]
    ETCD_INITIAL_ADVERTISE_PEER_URLS="https://etcd3:2380"
    ETCD_ADVERTISE_CLIENT_URLS="https://etcd3:2379"
    ETCD_INITIAL_CLUSTER="etcd-1=https://etcd1:2380,etcd-2=https://etcd2:2380,etcd-3=https://etcd3:2380"
    ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
    ETCD_INITIAL_CLUSTER_STATE="new"

    # [Security]
    ETCD_CERT_FILE="/etc/etcd/ssl/etcd_server.crt"
    ETCD_KEY_FILE="/etc/etcd/ssl/etcd_server.key"
    ETCD_TRUSTED_CA_FILE="/etc/etcd/ssl/ca.crt"
    ETCD_CLIENT_CERT_AUTH="true"
    ETCD_PEER_CERT_FILE="/etc/etcd/ssl/etcd_server.crt"
    ETCD_PEER_KEY_FILE="/etc/etcd/ssl/etcd_server.key"
    ETCD_PEER_TRUSTED_CA_FILE="/etc/etcd/ssl/ca.crt"
    ETCD_PEER_CLIENT_CERT_AUTH="true"

    # [Logging]
    ETCD_LOGGER="zap"
    ETCD_LOG_OUTPUTS="stderr"
    EOF
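The three files differ only in the member index and the node IP, so they could also be rendered from one template. The sketch below assumes the naming used in this guide (member etcd-N advertised as host etcdN); `render_etcd_conf` is a hypothetical helper, not an etcd tool, and it only prints to stdout.

```shell
#!/usr/bin/env bash
# render_etcd_conf is a hypothetical helper name.
# $1 = member index (1, 2, 3), $2 = that node's IP address.
render_etcd_conf() {
    local idx="$1" ip="$2"
    cat <<EOF
# [Member]
ETCD_NAME="etcd-${idx}"
ETCD_DATA_DIR="/etc/etcd/data"
ETCD_LISTEN_PEER_URLS="https://${ip}:2380"
ETCD_LISTEN_CLIENT_URLS="https://${ip}:2379,https://127.0.0.1:2379"

# [Clustering]
ETCD_INITIAL_ADVERTISE_PEER_URLS="https://etcd${idx}:2380"
ETCD_ADVERTISE_CLIENT_URLS="https://etcd${idx}:2379"
ETCD_INITIAL_CLUSTER="etcd-1=https://etcd1:2380,etcd-2=https://etcd2:2380,etcd-3=https://etcd3:2380"
ETCD_INITIAL_CLUSTER_TOKEN="etcd-cluster"
ETCD_INITIAL_CLUSTER_STATE="new"

# [Security]
ETCD_CERT_FILE="/etc/etcd/ssl/etcd_server.crt"
ETCD_KEY_FILE="/etc/etcd/ssl/etcd_server.key"
ETCD_TRUSTED_CA_FILE="/etc/etcd/ssl/ca.crt"
ETCD_CLIENT_CERT_AUTH="true"
ETCD_PEER_CERT_FILE="/etc/etcd/ssl/etcd_server.crt"
ETCD_PEER_KEY_FILE="/etc/etcd/ssl/etcd_server.key"
ETCD_PEER_TRUSTED_CA_FILE="/etc/etcd/ssl/ca.crt"
ETCD_PEER_CLIENT_CERT_AUTH="true"

# [Logging]
ETCD_LOGGER="zap"
ETCD_LOG_OUTPUTS="stderr"
EOF
}

# Demo: print the config for the second member.
render_etcd_conf 2 192.168.3.122
```

On each node the output would be piped into `sudo tee /etc/etcd/etcd.conf`, which keeps the shared settings identical across members.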

Install the service (on all three nodes, k8snode1-3)

    [Unit]
    Description=Etcd Server
    After=network.target
    After=network-online.target
    Wants=network-online.target

    [Service]
    Type=notify
    EnvironmentFile=/etc/etcd/etcd.conf
    ExecStart=/usr/local/bin/etcd
    Restart=on-failure
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target

Start the service (on all three nodes, k8snode1-3, at the same time)

    systemctl daemon-reload
    systemctl restart etcd
    systemctl enable etcd

    ETCDCTL_API=3 etcdctl --endpoints="https://k8snode1:2379,https://k8snode2:2379,https://k8snode3:2379" \
      --cacert=/etc/etcd/ssl/ca.crt \
      --cert=/etc/etcd/ssl/etcd_server.crt \
      --key=/etc/etcd/ssl/etcd_server.key \
      endpoint health -w table

Set up the K8S API server

Download the latest release package (k8snode1-3)

    mkdir -p /root/kube/
    cd /root/kube/
    wget https://dl.k8s.io/v1.36.0/kubernetes-server-linux-arm64.tar.gz
    tar -xzvf kubernetes-server-linux-arm64.tar.gz

Create the install and uninstall script

    cat <<'EOF' > /root/kube/k8s_manager.sh
    #!/bin/bash
    BIN_DIR="/root/kube/kubernetes/server/bin"
    TARGET_DIR="/usr/local/bin"
    K8S_BINS=(kube-apiserver kube-controller-manager kube-scheduler kubelet kube-proxy kubectl)

    # Copy each binary into TARGET_DIR and make it executable
    install_bins() {
        echo "--- Installing core components ---"
        for bin in "${K8S_BINS[@]}"; do
            if [ -f "$BIN_DIR/$bin" ]; then
                cp "$BIN_DIR/$bin" "$TARGET_DIR/"
                chmod +x "$TARGET_DIR/$bin"
                echo "[OK] installed: $bin"
            else
                echo "[ERROR] source file not found: $BIN_DIR/$bin"
            fi
        done
        echo "--- Install finished ---"
    }

    # Remove the binaries, and optionally the config and runtime data
    uninstall_bins() {
        echo "--- Removing core components ---"
        for bin in "${K8S_BINS[@]}"; do
            if [ -f "$TARGET_DIR/$bin" ]; then
                rm -f "$TARGET_DIR/$bin"
                echo "[OK] removed: $bin"
            else
                echo "[SKIP] $TARGET_DIR/$bin does not exist"
            fi
        done
        read -p "Also clean /etc/kubernetes and runtime data? (y/n): " confirm
        if [[ "$confirm" == "y" ]]; then
            echo "Cleaning config and data..."
            rm -rf /etc/kubernetes
            rm -rf /var/lib/kubelet
            rm -rf /var/log/kubernetes
            echo "[DONE] directories cleaned"
        fi
        echo "--- Uninstall finished ---"
    }

    case "$1" in
        install)
            install_bins
            ;;
        uninstall)
            uninstall_bins
            ;;
        *)
            echo "Usage: $0 {install|uninstall}"
            exit 1
            ;;
    esac
    EOF

    chmod +x /root/kube/k8s_manager.sh
    # The script uses bash arrays, so run it with bash
    bash /root/kube/k8s_manager.sh install

Generate the CA-signed API server certificate (k8snode1)

Create the cnf file, kube-apiserver-ssl.cnf (k8snode1)

    [ req ]
    req_extensions = v3_req
    distinguished_name = req_distinguished_name

    [ req_distinguished_name ]

    [ v3_req ]
    basicConstraints = CA:FALSE
    keyUsage = nonRepudiation, digitalSignature, keyEncipherment
    subjectAltName = @alt_names

    [ alt_names ]
    DNS.1 = kubernetes
    DNS.2 = kubernetes.default
    DNS.3 = kubernetes.default.svc
    DNS.4 = kubernetes.default.svc.cluster.local
    DNS.5 = k8smaster
    DNS.6 = k8smaster1
    DNS.7 = k8smaster2
    DNS.8 = k8smaster3
    IP.1 = 127.0.0.1
    IP.2 = 192.168.3.120
    IP.3 = 192.168.3.121
    IP.4 = 192.168.3.122
    IP.5 = 192.168.3.123
    IP.6 = 10.96.0.1

Issue the certificate (k8snode1)

    openssl genrsa -out kube-apiserver.key 2048

Generate the signing request (CSR) and sign it

    openssl req -new -key kube-apiserver.key \
      -config kube-apiserver-ssl.cnf \
      -subj "/CN=kubernetes" \
      -out kube-apiserver.csr

    openssl x509 -req -in kube-apiserver.csr \
      -CA ca.crt -CAkey ca.key \
      -CAcreateserial \
      -days 36500 \
      -extensions v3_req \
      -extfile kube-apiserver-ssl.cnf \
      -out kube-apiserver.crt

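Generate the token (k8snode1)

    vim /root/kube/gen_token.sh

Script contents:

    #!/bin/bash
    # Make sure the target directory exists before writing the token file
    mkdir -p /etc/kubernetes/ssl
    TOKEN=$(openssl rand -hex 16)
    cat <<EOF > /etc/kubernetes/ssl/token.csv
    ${TOKEN},kubelet-bootstrap,10001,"system:bootstrappers"
    EOF

Make it executable and run it:

    chmod +x /root/kube/gen_token.sh
    sh /root/kube/gen_token.sh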
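Each line of token.csv has the form token,user,uid,"groups". The sketch below builds one such line and is meant only to make that layout explicit; `make_bootstrap_token_line` is a hypothetical name, and reading /dev/urandom through `od` is used here as a stand-in for `openssl rand -hex 16` so the example has no openssl dependency.

```shell
#!/usr/bin/env bash
# make_bootstrap_token_line is a hypothetical helper name.
# Prints one token.csv line: <token>,<user>,<uid>,"<group>"
make_bootstrap_token_line() {
    local token
    # 16 random bytes rendered as 32 lowercase hex characters.
    token="$(od -An -tx1 -N16 /dev/urandom | tr -d ' \n')"
    printf '%s,kubelet-bootstrap,10001,"system:bootstrappers"\n' "$token"
}

# Demo: print a line; on a real node it would be redirected to
# /etc/kubernetes/ssl/token.csv instead.
make_bootstrap_token_line
```

The user name kubelet-bootstrap and the group system:bootstrappers are the values the script above writes; the API server reads the file through --token-auth-file.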

Distribute the certificates and token (k8snode1)

    cd /etc/kubernetes/ssl/

    scp ca.crt ca.key kube-apiserver.crt kube-apiserver.key token.csv root@192.168.3.122:/etc/kubernetes/ssl/
    scp ca.crt ca.key kube-apiserver.crt kube-apiserver.key token.csv root@192.168.3.123:/etc/kubernetes/ssl/

API server configuration file (k8snode1-3)

    mkdir -p /etc/kubernetes/cfg /var/log/kubernetes

Change 192.168.3.121 to the IP of the current master.

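--tls-cert-file: the API server's own identity certificate (the public part). It presents this certificate when a browser or kubectl connects to port 6443.

--tls-private-key-file: the API server's private key, used in the TLS handshake that protects HTTPS traffic.

--client-ca-file: the root certificate (CA). The API server uses it to verify that certificates presented by clients (such as kubectl) were issued by the cluster's own CA. It must match the controller manager's --cluster-signing-cert-file=/etc/kubernetes/ssl/ca.crt and --cluster-signing-key-file=/etc/kubernetes/ssl/ca.key. When the controller manager issues a certificate to a node, the node uses that new certificate to connect to the API server, and the API server validates it against this CA.

--kubelet-client-certificate: the identity certificate the API server presents when it contacts a node's kubelet.

--kubelet-client-key: the private key the API server uses when it accesses a kubelet.

--etcd-cafile / certfile / keyfile: the trio (CA, certificate, private key) the API server uses to access the etcd database.

--service-account-key-file: the verification key. It is used to verify the ServiceAccount tokens held by Pods.

--service-account-signing-key-file: the signing key. It is used to sign newly issued tokens.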

    cat <<EOF > /etc/kubernetes/cfg/kube-apiserver.conf
    KUBE_APISERVER_OPTS="--v=2 \\
    --log-dir=/var/log/kubernetes \\
    --etcd-servers=https://etcd1:2379,https://etcd2:2379,https://etcd3:2379 \\
    --bind-address=192.168.3.121 \\
    --secure-port=6443 \\
    --advertise-address=192.168.3.121 \\
    --allow-privileged=true \\
    --service-cluster-ip-range=10.96.0.0/16 \\
    --enable-admission-plugins=NamespaceLifecycle,LimitRanger,ServiceAccount,ResourceQuota,NodeRestriction \\
    --authorization-mode=RBAC,Node \\
    --enable-bootstrap-token-auth=true \\
    --token-auth-file=/etc/kubernetes/ssl/token.csv \\
    --service-node-port-range=30000-32767 \\
    --kubelet-client-certificate=/etc/kubernetes/ssl/kube-apiserver.crt \\
    --kubelet-client-key=/etc/kubernetes/ssl/kube-apiserver.key \\
    --tls-cert-file=/etc/kubernetes/ssl/kube-apiserver.crt \\
    --tls-private-key-file=/etc/kubernetes/ssl/kube-apiserver.key \\
    --client-ca-file=/etc/kubernetes/ssl/ca.crt \\
    --service-account-key-file=/etc/kubernetes/ssl/ca.key \\
    --service-account-signing-key-file=/etc/kubernetes/ssl/ca.key \\
    --service-account-issuer=https://kubernetes.default.svc.cluster.local \\
    --etcd-cafile=/etc/etcd/ssl/ca.crt \\
    --etcd-certfile=/etc/etcd/ssl/etcd_server.crt \\
    --etcd-keyfile=/etc/etcd/ssl/etcd_server.key \\
    --audit-log-maxage=30 \\
    --audit-log-maxbackup=3 \\
    --audit-log-maxsize=100 \\
    --audit-log-path=/var/log/kubernetes/proxy-audit.log"
    EOF
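The API server refuses to start if any referenced certificate, key, or token file is missing, so a quick pre-flight check can save a round of log reading. The sketch below extracts every /etc/... path ending in .crt, .key, or .csv from a config file and reports those that do not exist. Both function names are hypothetical, and the path pattern is an assumption that fits the layout used in this guide.

```shell
#!/usr/bin/env bash
# list_cert_paths and report_missing_files are hypothetical helper names.

# Print each distinct /etc/... path ending in .crt, .key, or .csv.
list_cert_paths() {
    grep -Eo '/etc/[A-Za-z0-9_./-]+\.(crt|key|csv)' "$1" | sort -u
}

# Print "missing: <path>" for every referenced file that does not exist.
# Returns non-zero if at least one file is missing.
report_missing_files() {
    local missing
    missing="$(list_cert_paths "$1" | while IFS= read -r path; do
        [ -e "$path" ] || echo "missing: $path"
    done)"
    if [ -n "$missing" ]; then
        echo "$missing"
        return 1
    fi
}

# Demo with a throwaway config that points at a path assumed not to exist.
demo_conf="$(mktemp)"
printf '%s\n' '--client-ca-file=/etc/demo-no-such-dir/ca.crt \' \
              '--tls-private-key-file=/etc/demo-no-such-dir/apiserver.key' > "$demo_conf"
report_missing_files "$demo_conf" || echo "fix the paths above before starting the service"
rm -f "$demo_conf"
```

On a master node the function would be pointed at /etc/kubernetes/cfg/kube-apiserver.conf before running `systemctl start kube-apiserver`.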

Create the systemd service (k8snode1-3)

/usr/lib/systemd/system/kube-apiserver.service:

    [Unit]
    Description=Kubernetes API Server
    Documentation=https://github.com/kubernetes/kubernetes
    After=network.target etcd.service

    [Service]
    EnvironmentFile=/etc/kubernetes/cfg/kube-apiserver.conf
    ExecStart=/usr/local/bin/kube-apiserver $KUBE_APISERVER_OPTS
    Restart=on-failure
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target

Start kube-apiserver (k8snode1-3)

    systemctl daemon-reload
    systemctl start kube-apiserver
    systemctl status kube-apiserver
    systemctl enable kube-apiserver

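Install Kube Controller Manager

Controller Manager certificate verification flow

kube-controller-manager.conf sets --service-account-private-key-file=/…/ca.key. This must be the same key that the apiserver configuration names in --service-account-key-file=/…/ca.key.

Scenario: when a new kubelet joins the cluster, it submits a request: "I need a certificate, please issue one."

Process: after the API server receives the request, the CSR signing controller inside kube-controller-manager takes over.

Action: it reads --cluster-signing-cert-file and --cluster-signing-key-file.

Result: it uses these two files (the root CA certificate and its key) to issue a new identity certificate to that kubelet.

When the kubelet connects to the API server with the certificate obtained through the CSR (call it kubelet.crt), the API server does the following:

Check the signature: the API server inspects the digital signature on kubelet.crt.

Look up the reference: the API server consults its own --client-ca-file parameter (in this configuration, /etc/kubernetes/ssl/ca.crt).

Compare: the API server finds that kubelet.crt was signed with the private key (ca.key) that corresponds to ca.crt.

Conclusion: verification passes. The API server accepts this kubelet as a member of the cluster.

Once verification passes, how does the API server know who the client is? Proving validity is only the first step; the API server also needs to determine the kubelet's permissions. That information is in the certificate itself: when the CM signs kubelet.crt, it copies the subject from the CSR into the certificate. The API server treats the CN (Common Name), for example system:node:k8s-node1, as the user name, and the O (Organization), for example system:nodes, as the group.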
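To make the CN and O mapping concrete, the sketch below pulls individual fields out of a subject string written in the same /K=V/K=V form that this guide passes to `openssl req -subj`. `subject_field` is a hypothetical helper; it parses plain text only and does not read a real certificate.

```shell
#!/usr/bin/env bash
# subject_field is a hypothetical helper name.
# $1 = field name (CN, O, ...), $2 = subject string in /K=V/K=V form.
subject_field() {
    # Put each K=V pair on its own line, then print the value of field $1.
    printf '%s\n' "$2" | tr '/' '\n' | sed -n "s/^$1=//p"
}

# Demo: the identity the API server would derive from a kubelet certificate.
subject='/O=system:nodes/CN=system:node:k8s-node1'
echo "user:  $(subject_field CN "$subject")"
echo "group: $(subject_field O "$subject")"
```

With these two values, RBAC and the Node authorizer decide what the kubelet may do; nothing else in the certificate carries permissions.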

Create the Controller Manager configuration file (k8snode1-3)

    cat <<EOF > /etc/kubernetes/cfg/kube-controller-manager.conf
    KUBE_CONTROLLER_MANAGER_OPTS="--v=2 \\
    --bind-address=127.0.0.1 \\
    --root-ca-file=/etc/kubernetes/ssl/ca.crt \\
    --cluster-signing-cert-file=/etc/kubernetes/ssl/ca.crt \\
    --cluster-signing-key-file=/etc/kubernetes/ssl/ca.key \\
    --service-account-private-key-file=/etc/kubernetes/ssl/ca.key \\
    --kubeconfig=/etc/kubernetes/cfg/kube-controller-manager.kubeconfig \\
    --leader-elect=true \\
    --use-service-account-credentials=true \\
    --cluster-signing-duration=87600h \\
    --cluster-cidr=10.244.0.0/16 \\
    --service-cluster-ip-range=10.96.0.0/16 \\
    --allocate-node-cidrs=true"
    EOF

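Generate the Kube Controller Manager certificate (k8snode1)

Set the cluster parameters (pointing at the VIP). Logic: when controller-manager connects to https://k8smaster:9443 (the HAProxy port used in the kubeconfig), the API server presents kube-apiserver.crt. controller-manager checks it against ca.crt, sees that it was issued by the cluster's own CA, and only then trusts the connection.

Role of --certificate-authority (the CA certificate)

It is the reference the controller manager (CM) uses to verify that the server is genuine.

Scenario: the CM is about to access https://k8smaster:9443.

What happens: when the CM connects, the API server (AS) shows the CM its identity certificate (kube-apiserver.crt).

What the CA does: the CM must decide whether this certificate is genuine or comes from an impostor. It takes the --certificate-authority from its kubeconfig (ca.crt) and checks the signature on the AS certificate against it.

Conclusion: only if the signature matches does the CM send data. Here the CA certificate protects the CM against man-in-the-middle attacks.

Role of --client-certificate (the client certificate)

It is the identity certificate the CM uses to prove who it is.

Scenario: once the CM has verified the AS, the AS in turn asks the CM: "Who are you, and are you allowed in?"

What happens: the CM hands the AS the certificate named by --client-certificate in its kubeconfig.

What the AS does: it checks this certificate against its own --client-ca-file (also ca.crt).

Conclusion: the AS sees that the certificate was issued by the cluster's own CA and lets the CM in. Here the client certificate proves that the CM is an authorized client.

Standard practice, followed below: issue a dedicated kube-controller-manager.crt whose CN (Common Name) is system:kube-controller-manager, rather than reusing kube-apiserver.crt. The AS then knows from the CN that the caller is the CM and grants it the corresponding permissions.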

Generate the certificate (k8snode1)

    openssl genrsa -out kube-controller-manager.key 2048

    openssl req -new -key kube-controller-manager.key \
      -out kube-controller-manager.csr \
      -subj "/C=CN/ST=Beijing/L=Beijing/O=system:kube-controller-manager/CN=system:kube-controller-manager"

    cat <<EOF > cm-ext.cnf
    keyUsage = critical, digitalSignature, keyEncipherment
    extendedKeyUsage = clientAuth, serverAuth
    EOF

    openssl x509 -req -in kube-controller-manager.csr \
      -CA ca.crt -CAkey ca.key -CAcreateserial \
      -out kube-controller-manager.crt -days 3650 \
      -extfile cm-ext.cnf

Create kube-controller-manager.kubeconfig (k8snode1)

    kubectl config set-cluster kubernetes \
      --certificate-authority=/etc/kubernetes/ssl/ca.crt \
      --embed-certs=true \
      --server=https://k8smaster:9443 \
      --kubeconfig=/etc/kubernetes/cfg/kube-controller-manager.kubeconfig

    kubectl config set-context default \
      --cluster=kubernetes \
      --user=system:kube-controller-manager \
      --kubeconfig=/etc/kubernetes/cfg/kube-controller-manager.kubeconfig

    kubectl config set-credentials system:kube-controller-manager \
      --client-certificate=kube-controller-manager.crt \
      --client-key=kube-controller-manager.key \
      --embed-certs=true \
      --kubeconfig=/etc/kubernetes/cfg/kube-controller-manager.kubeconfig

    kubectl config use-context default --kubeconfig=/etc/kubernetes/cfg/kube-controller-manager.kubeconfig

    kubectl config current-context --kubeconfig=/etc/kubernetes/cfg/kube-controller-manager.kubeconfig

    kubectl config get-contexts --kubeconfig=/etc/kubernetes/cfg/kube-controller-manager.kubeconfig

Distribute kube-controller-manager.kubeconfig

The certificate and key files do not need to be copied separately: the commands above used --embed-certs=true, so kube-controller-manager.kubeconfig already contains the issued certificate. Only the kubeconfig itself is distributed.

    scp /etc/kubernetes/cfg/kube-controller-manager.kubeconfig root@192.168.3.122:/etc/kubernetes/cfg/
    scp /etc/kubernetes/cfg/kube-controller-manager.kubeconfig root@192.168.3.123:/etc/kubernetes/cfg/
    kubectl config use-context default --kubeconfig=/etc/kubernetes/cfg/kube-controller-manager.kubeconfig

API server high availability

Install keepalived and haproxy (k8snode1-3)

    yum install -y keepalived haproxy

    groupadd -r haproxy
    useradd -r -g haproxy -s /sbin/nologin haproxy
    mkdir -p /var/lib/haproxy
    chown haproxy:haproxy /var/lib/haproxy

Configure the virtual IP (k8snode1-3)

Edit the file /etc/haproxy/haproxy.cfg:

    global
        log 127.0.0.1 local2
        chroot /var/lib/haproxy
        pidfile /var/run/haproxy.pid
        maxconn 4096
        user haproxy
        group haproxy
        daemon
        stats socket /var/lib/haproxy/stats
        ssl-default-bind-ciphers PROFILE=SYSTEM
        ssl-default-server-ciphers PROFILE=SYSTEM

    defaults
        mode tcp
        log global
        option dontlognull
        retries 3
        timeout http-request 10s
        timeout queue 1m
        timeout connect 10s
        timeout client 1m
        timeout server 1m
        timeout http-keep-alive 10s
        timeout check 10s
        maxconn 3000

    frontend kube-apiserver
        bind *:9443
        mode tcp
        option tcplog
        default_backend kube-apiserver

    backend kube-apiserver
        log 127.0.0.1 local3 err
        balance roundrobin
        server k8smaster1 192.168.3.121:6443 check inter 2000 fall 3 rise 2
        server k8smaster2 192.168.3.122:6443 check inter 2000 fall 3 rise 2
        server k8smaster3 192.168.3.123:6443 check inter 2000 fall 3 rise 2

    listen stats
        bind *:8888
        mode http
        stats auth admin:password
        stats refresh 5s
        stats uri /stats

Start haproxy (k8snode1-3)

    # Validate the configuration first
    haproxy -f /etc/haproxy/haproxy.cfg -c
    systemctl restart haproxy
    systemctl enable haproxy
    ps aux | grep haproxy
    netstat -lntp | grep -E "9443|8888"

Configure keepalived (k8snode1)

Create /etc/keepalived/keepalived.conf on k8smaster node1, then copy it to the other machines. Note that router_id, state, and priority differ on each machine.

    global_defs {
        script_user root
        enable_script_security
        router_id k8smaster1
        vrrp_skip_check_adv_addr
        vrrp_garp_interval 0
        vrrp_gna_interval 0
    }

    vrrp_script check_haproxy {
        script "/etc/keepalived/check_haproxy.sh"
        interval 3
        weight -20
    }

    vrrp_instance VI_1 {
        state MASTER
        interface ens160
        virtual_router_id 51
        priority 100
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 1111
        }
        virtual_ipaddress {
            192.168.3.120
        }
        track_script {
            check_haproxy
        }
    }

Define the check_haproxy script (k8snode1)

    cat <<'EOF' > /etc/keepalived/check_haproxy.sh
    #!/bin/bash
    # Fail if nothing is listening on port 9443, i.e. haproxy is down
    if ! ss -lnt | grep -q :9443; then
        exit 1
    fi
    EOF

The script must be executable (k8snode1)

    chmod +x /etc/keepalived/check_haproxy.sh

Copy to the other nodes (k8snode1)

    scp /etc/keepalived/* root@192.168.3.122:/etc/keepalived/
    scp /etc/keepalived/* root@192.168.3.123:/etc/keepalived/

Following the note above, adjust router_id, state, and priority in the copied configuration files (k8snode2-3).
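The per-node adjustment can be scripted instead of edited by hand. The following is a sketch only: `customize_keepalived` is a hypothetical helper, and the values in the demo (state BACKUP, priority 90 for the second node) are assumptions that follow common VRRP practice; the original does not state them.

```shell
#!/usr/bin/env bash
# customize_keepalived is a hypothetical helper name.
# $1 = config file, $2 = router_id, $3 = state, $4 = priority.
# A .bak copy of the file is kept next to it.
customize_keepalived() {
    local conf="$1" router_id="$2" state="$3" priority="$4"
    # Each pattern is anchored to the start of the line (after indentation),
    # so "virtual_router_id" is not touched by the router_id rule.
    sed -i.bak -E \
        -e "s/^([[:space:]]*router_id[[:space:]]+).*/\1${router_id}/" \
        -e "s/^([[:space:]]*state[[:space:]]+).*/\1${state}/" \
        -e "s/^([[:space:]]*priority[[:space:]]+).*/\1${priority}/" \
        "$conf"
}

# Demo on a temporary excerpt of the config.
demo_conf="$(mktemp)"
printf '%s\n' '    router_id k8smaster1' '    state MASTER' \
              '    virtual_router_id 51' '    priority 100' > "$demo_conf"
customize_keepalived "$demo_conf" k8smaster2 BACKUP 90   # assumed values
cat "$demo_conf"
rm -f "$demo_conf" "$demo_conf.bak"
```

On k8snode2 and k8snode3 the function would be run against /etc/keepalived/keepalived.conf with that node's own router_id and a priority lower than the MASTER's.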

Start keepalived.service (k8snode1-3)

    systemctl restart keepalived.service
    systemctl enable keepalived.service
    ip addr show ens160
    journalctl -u keepalived -f

Verify the VIP

Check which node holds the VIP, then stop keepalived node by node and confirm that the VIP moves:

    ip a | grep 192.168.3.120
    # on k8snode1
    systemctl stop keepalived.service
    ip a | grep 192.168.3.120
    # on the node that now holds the VIP
    systemctl stop keepalived.service
    ip a | grep 192.168.3.120

Start Kube Controller Manager (k8snode1-3)

    cat <<EOF > /usr/lib/systemd/system/kube-controller-manager.service
    [Unit]
    Description=Kubernetes Controller Manager
    Documentation=https://github.com/kubernetes/kubernetes

    [Service]
    EnvironmentFile=/etc/kubernetes/cfg/kube-controller-manager.conf
    ExecStart=/usr/local/bin/kube-controller-manager \$KUBE_CONTROLLER_MANAGER_OPTS
    Restart=on-failure
    LimitNOFILE=65536

    [Install]
    WantedBy=multi-user.target
    EOF

    systemctl start kube-controller-manager
    systemctl restart kube-controller-manager
    systemctl status kube-controller-manager
    systemctl enable kube-controller-manager
    netstat -tpln | grep kube-controll
    journalctl -u kube-controller-manager -f

Generate the Admin kubeconfig (grants kubectl administrative rights) (k8snode1)

Generate the Admin certificate (k8snode1)

    openssl genrsa -out admin.key 2048

    openssl req -new -key admin.key \
      -out admin.csr \
      -subj "/C=CN/ST=Beijing/L=Beijing/O=system:masters/CN=kubernetes"

Create the Admin certificate cnf file, then sign and build the kubeconfig (k8snode1)

    cat <<EOF > admin-k8s-cert.cnf
    [req]
    distinguished_name = req_distinguished_name
    prompt = no

    [req_distinguished_name]
    C = CN
    ST = Beijing
    L = Beijing
    O = system:masters
    CN = kubernetes

    [v3_req]
    keyUsage = critical, digitalSignature, keyEncipherment
    extendedKeyUsage = clientAuth, serverAuth
    subjectAltName = @alt_names

    [alt_names]
    # --- DNS aliases ---
    DNS.1 = kubernetes
    DNS.2 = kubernetes.default
    DNS.3 = kubernetes.default.svc
    DNS.4 = kubernetes.default.svc.cluster.local
    DNS.5 = k8smaster
    DNS.6 = k8smaster1
    DNS.7 = k8smaster2
    DNS.8 = k8smaster3

    # --- IP addresses ---
    IP.1 = 127.0.0.1
    IP.2 = 192.168.3.120
    IP.3 = 192.168.3.121
    IP.4 = 192.168.3.122
    IP.5 = 192.168.3.123
    # First address of the Service cluster IP range (used by Pods inside the cluster)
    IP.6 = 10.96.0.1
    EOF

    # Sign the admin certificate
    openssl x509 -req -in admin.csr \
      -CA ca.crt -CAkey ca.key -CAcreateserial \
      -out admin.crt -days 3650 \
      -extensions v3_req -extfile admin-k8s-cert.cnf

    # Build the admin kubeconfig
    mkdir -p ~/.kube
    kubectl config set-cluster kubernetes \
      --certificate-authority=/etc/kubernetes/ssl/ca.crt \
      --embed-certs=true \
      --server=https://k8smaster:9443 \
      --kubeconfig=/root/.kube/config

    kubectl config set-credentials kubernetes \
      --client-certificate=admin.crt \
      --client-key=admin.key \
      --embed-certs=true \
      --kubeconfig=/root/.kube/config

    kubectl config set-context kubernetes \
      --cluster=kubernetes \
      --user=kubernetes \
      --kubeconfig=/root/.kube/config

    kubectl config use-context kubernetes --kubeconfig=/root/.kube/config

    kubectl config get-contexts --kubeconfig=/root/.kube/config

    # Copy the kubeconfig to the other masters
    scp /root/.kube/* root@192.168.3.122:/root/.kube/
    scp /root/.kube/* root@192.168.3.123:/root/.kube/

Check kube-controller-manager and kube-apiserver

    [root@k8snode1 ~]# kubectl get lease -n kube-system
    NAME                                   HOLDER                                                                      AGE
    apiserver-ctizcdz57znnocnyti6ycgyu3i   apiserver-ctizcdz57znnocnyti6ycgyu3i_e93d21da-311d-4e8f-9577-03407902e638   6d20h
    apiserver-k3qxrawih5rj2jvagowwwcgi54   apiserver-k3qxrawih5rj2jvagowwwcgi54_aea946bc-28ff-4df2-a673-f2f453f3c6a6   4d12h
    apiserver-rq3dcmitwparyow7yeum3nqx4i   apiserver-rq3dcmitwparyow7yeum3nqx4i_d1ce8d65-f2e1-4277-9a51-d06d461b4d53   6d20h
    kube-controller-manager                k8snode3_889ada37-db80-4dc5-a921-b799498babdf                               52m

安装kube-proxy(k8snode1-3)

证书生成(k8snode1)

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 openssl genrsa -out kube-proxy.key 2048 openssl req -new -key kube-proxy.key \ -out kube-proxy.csr \ -subj "/C=CN/ST=Beijing/L=Beijing/O=system:node-proxier/CN=system:kube-proxy" openssl x509 -req -in kube-proxy.csr \ -CA /etc/kubernetes/ssl/ca.crt \ -CAkey /etc/kubernetes/ssl/ca.key \ -CAcreateserial \ -out kube-proxy.crt -days 3650 \ -extensions v3_req -extfile admin-k8s-cert.cnf

kube-proxy.kubeconfig生成(k8snode1)

1 2 3 4 5 6 7 8 9 10 11 12 13 14 15 16 17 18 19 20 21 22 kubectl config set-cluster kubernetes \ --certificate-authority=/etc/kubernetes/ssl/ca.crt \ --embed-certs=true \ --server=https://k8smaster:9443 \ --kubeconfig=/etc/kubernetes/cfg/kube-proxy.kubeconfig kubectl config set-credentials system:kube-proxy \ --client-certificate=kube-proxy.crt \ --client-key=kube-proxy.key \ --embed-certs=true \ --kubeconfig=/etc/kubernetes/cfg/kube-proxy.kubeconfig kubectl config set-context default \ --cluster=kubernetes \ --user=system:kube-proxy \ --kubeconfig=/etc/kubernetes/cfg/kube-proxy.kubeconfig kubectl config use-context default --kubeconfig=/etc/kubernetes/cfg/kube-proxy.kubeconfig

Distribute kube-proxy.kubeconfig (k8snode1)

scp /etc/kubernetes/cfg/kube-proxy.kubeconfig root@192.168.3.122:/etc/kubernetes/cfg/
scp /etc/kubernetes/cfg/kube-proxy.kubeconfig root@192.168.3.123:/etc/kubernetes/cfg/

kube-proxy service file (k8snode1-3)

vim /usr/lib/systemd/system/kube-proxy.service

[Unit]
Description=Kubernetes Proxy
After=network.target

[Service]
ExecStart=/usr/local/bin/kube-proxy \
  --config=/etc/kubernetes/cfg/kube-proxy-config.yml \
  --v=2
Restart=on-failure
RestartSec=5
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target

kube-proxy configuration YAML (k8snode1)

vim /etc/kubernetes/cfg/kube-proxy-config.yml

kind: KubeProxyConfiguration
apiVersion: kubeproxy.config.k8s.io/v1alpha1
bindAddress: 0.0.0.0
healthzBindAddress: 0.0.0.0:10256
metricsBindAddress: 0.0.0.0:10249
clientConnection:
  kubeconfig: /etc/kubernetes/cfg/kube-proxy.kubeconfig
  acceptContentTypes: ""
  contentType: application/vnd.kubernetes.protobuf
  qps: 5
  burst: 10
hostnameOverride: k8snode1
clusterCIDR: 10.244.0.0/16
mode: "ipvs"
ipvs:
  scheduler: "rr"
  syncPeriod: 30s
  minSyncPeriod: 0s
  tcpTimeout: 0s
  tcpFinTimeout: 0s
  udpTimeout: 0s
iptables:
  masqueradeAll: false
  syncPeriod: 30s
  minSyncPeriod: 0s
conntrack:
  maxPerCore: 32768
  min: 131072

Distribute the kube-proxy configuration YAML (k8snode1)

scp /etc/kubernetes/cfg/kube-proxy-config.yml root@192.168.3.122:/etc/kubernetes/cfg/
scp /etc/kubernetes/cfg/kube-proxy-config.yml root@192.168.3.123:/etc/kubernetes/cfg/

On the other two nodes, change hostnameOverride in the configuration file to k8snode2 and k8snode3 respectively.
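Editing hostnameOverride by hand on every node is easy to forget. As a rough sketch, the change can be scripted with GNU sed; the function name `set_hostname_override` is hypothetical, and the demo runs against a temporary file rather than the real configuration.

```shell
# set_hostname_override FILE NAME: rewrite the top-level hostnameOverride
# line in FILE (GNU sed assumed, as shipped with RHEL).
set_hostname_override() {
  local file="$1" name="$2"
  sed -i "s/^hostnameOverride:.*/hostnameOverride: ${name}/" "$file"
}

# Demo on a throwaway copy of the relevant lines:
tmp="$(mktemp)"
printf 'hostnameOverride: k8snode1\nclusterCIDR: 10.244.0.0/16\n' > "$tmp"
set_hostname_override "$tmp" k8snode2
cat "$tmp"
rm -f "$tmp"
```

On a real node this would be called as `set_hostname_override /etc/kubernetes/cfg/kube-proxy-config.yml k8snode2`, assuming the file path used in this guide.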

Start the kube-proxy service (k8snode1-3)

systemctl start kube-proxy
systemctl status kube-proxy
systemctl enable kube-proxy
journalctl -u kube-proxy -f

Check kube-proxy service health (k8snode1-3)

curl -s http://127.0.0.1:10256/healthz
Expected response: ok

curl -s http://127.0.0.1:10249/metrics | head -n 10
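For scripted checks it may be safer to test the HTTP status code than the response body, since the body format can differ between kube-proxy versions. The sketch below makes that assumption; the function names `healthz_ok` and `check_local_kube_proxy` are hypothetical, and the port is the healthzBindAddress configured in this guide.

```shell
# healthz_ok URL: succeed only if the endpoint answers with HTTP 200.
healthz_ok() {
  local url="$1" code
  # "|| true" keeps a refused connection from aborting scripts run with set -e
  code="$(curl -s -o /dev/null -w '%{http_code}' --max-time 3 "$url" || true)"
  [ "$code" = "200" ]
}

# check_local_kube_proxy: report on the local kube-proxy healthz endpoint.
check_local_kube_proxy() {
  if healthz_ok "http://127.0.0.1:10256/healthz"; then
    echo "kube-proxy healthy"
  else
    echo "kube-proxy NOT healthy"
    return 1
  fi
}
```

Run `check_local_kube_proxy` on each of k8snode1-3 after starting the service.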

Check that the proxy is working

kubectl get componentstatuses

Scheduler deployment steps

Generate the scheduler certificate (k8snode1)

scheduler-openssl.cnf:

[req]
req_extensions = v3_req
distinguished_name = req_distinguished_name
[req_distinguished_name]
[ v3_req ]
basicConstraints = CA:FALSE
keyUsage = nonRepudiation, digitalSignature, keyEncipherment
extendedKeyUsage = clientAuth, serverAuth

openssl genrsa -out kube-scheduler.key 2048

openssl req -new -key kube-scheduler.key \
  -config scheduler-openssl.cnf \
  -subj "/C=CN/ST=Beijing/L=Beijing/O=system:kube-scheduler/CN=system:kube-scheduler" \
  -out kube-scheduler.csr

openssl x509 -req -in kube-scheduler.csr \
  -CA ca.crt -CAkey ca.key -CAcreateserial \
  -out kube-scheduler.crt -days 3650 \
  -extensions v3_req -extfile scheduler-openssl.cnf

Generate kube-scheduler.kubeconfig (k8snode1)

kubectl config set-cluster kubernetes \
  --certificate-authority=ca.crt \
  --embed-certs=true \
  --server=https://k8smaster:9443 \
  --kubeconfig=/etc/kubernetes/cfg/kube-scheduler.kubeconfig

kubectl config set-credentials system:kube-scheduler \
  --client-certificate=kube-scheduler.crt \
  --client-key=kube-scheduler.key \
  --embed-certs=true \
  --kubeconfig=/etc/kubernetes/cfg/kube-scheduler.kubeconfig

kubectl config set-context default \
  --cluster=kubernetes \
  --user=system:kube-scheduler \
  --kubeconfig=/etc/kubernetes/cfg/kube-scheduler.kubeconfig

kubectl config use-context default --kubeconfig=/etc/kubernetes/cfg/kube-scheduler.kubeconfig

Generate kube-scheduler.yml and kube-scheduler.conf (k8snode1)

/etc/kubernetes/cfg/kube-scheduler.yml

apiVersion: kubescheduler.config.k8s.io/v1
kind: KubeSchedulerConfiguration
clientConnection:
  kubeconfig: /etc/kubernetes/cfg/kube-scheduler.kubeconfig
  acceptContentTypes: "application/vnd.kubernetes.protobuf"
  contentType: "application/vnd.kubernetes.protobuf"
  qps: 50
  burst: 100
leaderElection:
  leaderElect: true
  resourceNamespace: "kube-system"
  resourceName: "kube-scheduler"

cat > /etc/kubernetes/cfg/kube-scheduler.conf <<EOF
KUBE_SCHEDULER_OPTS="--config=/etc/kubernetes/cfg/kube-scheduler.yml \\
--v=2"
EOF

Distribute the kube-scheduler files (k8snode1)

scp /etc/kubernetes/cfg/kube-scheduler.kubeconfig /etc/kubernetes/cfg/kube-scheduler.yml /etc/kubernetes/cfg/kube-scheduler.conf root@192.168.3.122:/etc/kubernetes/cfg/

scp /etc/kubernetes/cfg/kube-scheduler.kubeconfig /etc/kubernetes/cfg/kube-scheduler.yml /etc/kubernetes/cfg/kube-scheduler.conf root@192.168.3.123:/etc/kubernetes/cfg/
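The same copy-to-every-peer pattern recurs throughout this guide, and hand-edited scp lines are an easy place for a wrong IP to slip in. A small loop is one possible way to reduce that risk. In this sketch the `NODES` array and the `distribute` function are hypothetical names; the IPs are the two peer addresses used in the scp commands above.

```shell
# Peer nodes that receive files from k8snode1 (taken from the scp lines above).
NODES=(192.168.3.122 192.168.3.123)

# distribute DEST_DIR FILE...: copy FILEs into DEST_DIR on every node in NODES.
# With DRY_RUN=1 the scp commands are printed instead of executed.
distribute() {
  local dest="$1" node
  shift
  for node in "${NODES[@]}"; do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "scp $* root@${node}:${dest}"
    else
      scp "$@" "root@${node}:${dest}"
    fi
  done
}

# Preview what would be copied without touching the network:
DRY_RUN=1 distribute /etc/kubernetes/cfg/ \
  /etc/kubernetes/cfg/kube-scheduler.kubeconfig \
  /etc/kubernetes/cfg/kube-scheduler.yml \
  /etc/kubernetes/cfg/kube-scheduler.conf
```

Dropping `DRY_RUN=1` performs the actual copy.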

Start kube-scheduler (k8snode1-3)

/usr/lib/systemd/system/kube-scheduler.service

[Unit]
Description=Kubernetes Scheduler
Documentation=https://github.com/kubernetes/kubernetes
After=network.target

[Service]
EnvironmentFile=/etc/kubernetes/cfg/kube-scheduler.conf
ExecStart=/usr/local/bin/kube-scheduler $KUBE_SCHEDULER_OPTS
Restart=on-failure
RestartSec=5
LimitNOFILE=65536

[Install]
WantedBy=multi-user.target

1. Reload the systemd configuration

systemctl daemon-reload
systemctl enable kube-scheduler
systemctl start kube-scheduler
systemctl status kube-scheduler
journalctl -u kube-scheduler -f

Check kube-scheduler (k8snode1-3)

[root@k8snode1 ~]# kubectl get cs
Warning: v1 ComponentStatus is deprecated in v1.19+
NAME                 STATUS    MESSAGE   ERROR
scheduler            Healthy   ok
controller-manager   Healthy   ok
etcd-0               Healthy   ok

[root@k8snode1 ~]# kubectl get lease -n kube-system kube-scheduler
NAME             HOLDER                                          AGE
kube-scheduler   k8snode3_bc33d48f-4a2f-4f66-9815-bf552df697eb   98s

Install kubelet

Generate kubelet.conf (k8snode1-3)

cat > /etc/kubernetes/cfg/kubelet.conf <<EOF
KUBELET_OPTS="--v=2 \\
--kubeconfig=/etc/kubernetes/cfg/kubelet.kubeconfig \\
--bootstrap-kubeconfig=/etc/kubernetes/cfg/bootstrap.kubeconfig \\
--config=/etc/kubernetes/cfg/kubelet-config.yml \\
--cert-dir=/etc/kubernetes/certs"
EOF

/usr/lib/systemd/system/kubelet.service

[Unit]
Description=Kubernetes Kubelet
After=containerd.service
Requires=containerd.service

[Service]
EnvironmentFile=/etc/kubernetes/cfg/kubelet.conf
ExecStart=/usr/local/bin/kubelet $KUBELET_OPTS
Restart=on-failure
KillMode=process

[Install]
WantedBy=multi-user.target

Generate kubelet-config.yml (k8snode1-3)

vim /etc/kubernetes/cfg/kubelet-config.yml

kind: KubeletConfiguration
apiVersion: kubelet.config.k8s.io/v1beta1
address: 0.0.0.0
port: 10250
readOnlyPort: 0
cgroupDriver: systemd
clusterDNS:
  - 10.96.0.2
clusterDomain: cluster.local
failSwapOn: false

authentication:
  anonymous:
    enabled: false
  webhook:
    enabled: true
  x509:
    clientCAFile: /etc/kubernetes/ssl/ca.crt

authorization:
  mode: Webhook

Generate bootstrap.kubeconfig (k8snode1)

kubectl config set-cluster kubernetes \
  --certificate-authority=/etc/kubernetes/ssl/ca.crt \
  --embed-certs=true \
  --server=https://k8smaster:9443 \
  --kubeconfig=/etc/kubernetes/cfg/bootstrap.kubeconfig

kubectl config set-credentials "kubelet-bootstrap" \
  --token=c1aa5cb23fee29d424e56855884dacb9 \
  --kubeconfig=/etc/kubernetes/cfg/bootstrap.kubeconfig

kubectl config set-context default \
  --cluster=kubernetes \
  --user="kubelet-bootstrap" \
  --kubeconfig=/etc/kubernetes/cfg/bootstrap.kubeconfig

kubectl config use-context default --kubeconfig=/etc/kubernetes/cfg/bootstrap.kubeconfig

Distribute bootstrap.kubeconfig (k8snode1)

scp /etc/kubernetes/cfg/bootstrap.kubeconfig root@192.168.3.122:/etc/kubernetes/cfg/
scp /etc/kubernetes/cfg/bootstrap.kubeconfig root@192.168.3.123:/etc/kubernetes/cfg/

kubectl config use-context default --kubeconfig=/etc/kubernetes/cfg/bootstrap.kubeconfig

Start the kubelet service (k8snode1-3)

systemctl start kubelet
systemctl status kubelet
systemctl enable kubelet

Authorize kubelet bootstrapping on the master (k8snode1)

kubectl create clusterrolebinding kubelet-bootstrap \
  --clusterrole=system:node-bootstrapper \
  --user=kubelet-bootstrap

Approve the kubelet CSRs on the master (k8smaster1 (k8snode1))

kubectl get csr

kubectl certificate approve <CSR-name>
kubectl get nodes
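When several nodes bootstrap at once, approving each CSR by name gets tedious. As a sketch, the pending names can be filtered out of the `kubectl get csr` table; this assumes CONDITION is the last column of that table, and the function name `pending_csrs` is hypothetical.

```shell
# pending_csrs: read `kubectl get csr` table output on stdin and print the
# names of requests whose last column (assumed to be CONDITION) is "Pending".
pending_csrs() {
  awk 'NR > 1 && $NF == "Pending" { print $1 }'
}

# Possible usage on a master node (review the list before approving):
#   kubectl get csr | pending_csrs
#   kubectl get csr | pending_csrs | xargs -r kubectl certificate approve
```

Bulk approval is only reasonable when every pending request comes from a node you expect; otherwise approve them one at a time as shown above.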

Grant the kubernetes user full access to the kubelet API

kubectl create clusterrolebinding kubelet-api-admin \
  --clusterrole=system:kubelet-api-admin \
  --user=kubernetes

Taint the master nodes so that Pods are not scheduled on them

kubectl taint nodes k8snode1 k8snode2 k8snode3 node-role.kubernetes.io/master=:NoSchedule

Add new worker nodes to the K8S cluster

Install the required binaries

scp /usr/local/bin/kubelet /usr/local/bin/kube-proxy k8snode4:/usr/local/bin/
scp -r /etc/kubernetes k8snode4:/etc/
scp /usr/lib/systemd/system/kubelet.service /usr/lib/systemd/system/kube-proxy.service k8snode4:/usr/lib/systemd/system/

Modify the configuration file

In kube-proxy-config.yml, change hostnameOverride to the hostname of the new node.

Start the services

systemctl start kube-proxy
systemctl status kube-proxy
systemctl enable kube-proxy

systemctl start kubelet
systemctl status kubelet
systemctl enable kubelet

Apply labels

# Run on the master to label the worker nodes
kubectl label nodes k8snode4 k8snode5 node-role.kubernetes.io/worker=

Check the K8S cluster

kubectl get nodes
kubectl get --raw='/healthz?verbose'
journalctl -u kubelet -f
journalctl -u kube-proxy -f

Install the CNI network plugin

curl https://calico-v3-25.netlify.app/archive/v3.25/manifests/calico.yaml -O

sed -i 's/192.168.0.0\/16/10.244.0.0\/16/g' calico.yaml

sed -i 's/# - name: CALICO_IPV4POOL_CIDR/- name: CALICO_IPV4POOL_CIDR/g' calico.yaml
sed -i 's/#   value: "10.244.0.0\/16"/  value: "10.244.0.0\/16"/g' calico.yaml

kubectl apply -f calico.yaml

Check that everything is Ready

[root@k8snode1 k8sresource]# kubectl get nodes
NAME       STATUS   ROLES                  AGE   VERSION
k8snode1   Ready    control-plane,master   41h   v1.36.0
k8snode2   Ready    control-plane,master   41h   v1.36.0
k8snode3   Ready    control-plane,master   41h   v1.36.0
k8snode4   Ready    worker                 40h   v1.36.0
k8snode5   Ready    worker                 40h   v1.36.0

[root@k8snode1 k8sresource]# kubectl get pods -n kube-system | grep calico
calico-kube-controllers-c57dfffd6-9sbjs   1/1   Running   0   77s
calico-node-52fnv                         1/1   Running   0   77s
calico-node-5ndpq                         1/1   Running   0   77s
calico-node-77bst                         1/1   Running   0   77s
calico-node-8wpgl                         1/1   Running   0   77s
calico-node-bgk6r                         1/1   Running   0   77s

Install the DNS service

Run three CoreDNS replicas on the master nodes. The manifest below sets the node affinity and the clusterIP.

Create the coredns.yaml file:

cat > coredns.yaml << 'EOF'
---
apiVersion: v1
kind: ServiceAccount
metadata:
  name: coredns
  namespace: kube-system
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: system:coredns
rules:
  - apiGroups: [""]
    resources: [endpoints, services, pods, namespaces]
    verbs: [list, watch]
  - apiGroups: [""]
    resources: [nodes]
    verbs: [get]
  - apiGroups: [discovery.k8s.io]
    resources: [endpointslices]
    verbs: [list, watch]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: system:coredns
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: system:coredns
subjects:
  - kind: ServiceAccount
    name: coredns
    namespace: kube-system
---
apiVersion: v1
kind: ConfigMap
metadata:
  name: coredns
  namespace: kube-system
data:
  Corefile: |
    .:53 {
        errors
        health {
            lameduck 5s
        }
        ready
        kubernetes cluster.local in-addr.arpa ip6.arpa {
            pods insecure
            fallthrough in-addr.arpa ip6.arpa
            ttl 30
        }
        prometheus :9153
        forward . 192.168.3.1 114.114.114.114 {
            max_concurrent 1000
        }
        cache 30
        loop
        reload
        loadbalance
    }
---
apiVersion: apps/v1
kind: Deployment
metadata:
  name: coredns
  namespace: kube-system
  labels:
    k8s-app: kube-dns
spec:
  replicas: 3
  strategy:
    type: RollingUpdate
    rollingUpdate:
      maxUnavailable: 1
  selector:
    matchLabels:
      k8s-app: kube-dns
  template:
    metadata:
      labels:
        k8s-app: kube-dns
    spec:
      serviceAccountName: coredns
      tolerations:
        - key: CriticalAddonsOnly
          operator: Exists
        - key: node-role.kubernetes.io/control-plane
          effect: NoSchedule
        - key: node-role.kubernetes.io/master
          effect: NoSchedule
      affinity:
        nodeAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            nodeSelectorTerms:
              - matchExpressions:
                  - key: node-role.kubernetes.io/control-plane
                    operator: Exists
        podAntiAffinity:
          requiredDuringSchedulingIgnoredDuringExecution:
            - labelSelector:
                matchExpressions:
                  - key: k8s-app
                    operator: In
                    values: [kube-dns]
              topologyKey: kubernetes.io/hostname
      containers:
        - name: coredns
          image: registry.k8s.io/coredns/coredns:v1.12.0
          imagePullPolicy: IfNotPresent
          resources:
            limits:
              memory: 170Mi
            requests:
              cpu: 100m
              memory: 70Mi
          args: ["-conf", "/etc/coredns/Corefile"]
          volumeMounts:
            - name: config-volume
              mountPath: /etc/coredns
              readOnly: true
          ports:
            - containerPort: 53
              name: dns
              protocol: UDP
            - containerPort: 53
              name: dns-tcp
              protocol: TCP
            - containerPort: 9153
              name: metrics
              protocol: TCP
          livenessProbe:
            httpGet:
              path: /health
              port: 8080
              scheme: HTTP
            initialDelaySeconds: 60
            periodSeconds: 10
            timeoutSeconds: 1
            successThreshold: 1
            failureThreshold: 5
          readinessProbe:
            httpGet:
              path: /ready
              port: 8181
              scheme: HTTP
            periodSeconds: 2
            timeoutSeconds: 1
            successThreshold: 1
            failureThreshold: 3
          securityContext:
            allowPrivilegeEscalation: false
            capabilities:
              add: [NET_BIND_SERVICE]
              drop: [ALL]
            readOnlyRootFilesystem: true
      volumes:
        - name: config-volume
          configMap:
            name: coredns
            items:
              - key: Corefile
                path: Corefile
---
apiVersion: v1
kind: Service
metadata:
  name: kube-dns
  namespace: kube-system
  labels:
    k8s-app: kube-dns
    kubernetes.io/name: CoreDNS
    kubernetes.io/cluster-service: "true"
  annotations:
    prometheus.io/scrape: "true"
    prometheus.io/port: "9153"
spec:
  selector:
    k8s-app: kube-dns
  clusterIP: 10.96.0.2
  ports:
    - name: dns
      port: 53
      protocol: UDP
    - name: dns-tcp
      port: 53
      protocol: TCP
    - name: metrics
      port: 9153
      protocol: TCP
EOF

Install CoreDNS

kubectl apply -f coredns.yaml

kubectl get pods -n kube-system -l k8s-app=kube-dns -o wide

kubectl get configmap coredns -n kube-system -o yaml | grep forward

kubectl run test-dns --image=busybox:1.28 --rm -it --restart=Never -- nslookup kubernetes.default

Part 3: Remove K8S

systemctl stop kubelet
systemctl stop containerd
kubeadm reset -f

rm -rf /etc/kubernetes
rm -rf /var/lib/kubelet
rm -rf /var/lib/etcd
sudo rm -rf /var/run/kubernetes ~/.kube
sudo rm -rf /usr/bin/kubeadm /usr/bin/kubectl /usr/bin/kubelet
sudo systemctl daemon-reload
sudo systemctl reset-failed
rm -rf /etc/cni/net.d
rm -rf /var/lib/cni

iptables -F
iptables -t nat -F
iptables -t mangle -F
iptables -X
nft flush ruleset

ctr -n k8s.io containers ls
ctr -n k8s.io images ls
ctr -n k8s.io containers rm -f $(ctr -n k8s.io containers ls -q)
ctr -n k8s.io images rm $(ctr -n k8s.io images ls -q)

ip netns list
for ns in $(ip netns list | awk '{print $1}'); do
  sudo ip netns delete "$ns"
done

ip link delete cali149f5445c04
sudo ip link set tunl0 down
sudo ip link delete tunl0
ip link delete cni0
ip link delete flannel.1
ip link delete calico0

lsmod | grep ipip
sudo modprobe -r ipip

dnf list installed | grep -E 'kubelet|kubeadm|kubectl|kubernetes'
yum remove -y kubelet kubeadm kubectl
dnf clean all

sudo crictl rmi --all
sudo du -sh /var/lib/containerd
for mount in $(mount | grep containerd | cut -d ' ' -f 3); do
  sudo umount -l "$mount"
done
sudo rm -rf /var/lib/containerd/*
sudo rm -rf /var/run/containerd/*
sudo systemctl start containerd
reboot

ps -ef | grep kube
crictl ps -a
ip a | grep cni
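Interface names such as cali149f5445c04 differ on every host, so the hard-coded name in the cleanup commands will rarely match. The sketch below lists leftover CNI interfaces from `ip -o link show` output instead; the function name `cni_links` and the name patterns (cali*, tunl0, cni0, flannel.*) are assumptions based on the interfaces mentioned in this cleanup.

```shell
# cni_links: read `ip -o link show` output on stdin and print interface names
# that look like CNI leftovers (cali*, tunl0, cni0, flannel.*).
cni_links() {
  awk -F': ' '{
    split($2, parts, "@")   # drop the "@ifN" or "@NONE" suffix
    name = parts[1]
    if (name ~ /^cali/ || name == "tunl0" || name == "cni0" || name ~ /^flannel\./)
      print name
  }'
}

# Possible usage on a node being cleaned up:
#   ip -o link show | cni_links
#   ip -o link show | cni_links | while read -r dev; do sudo ip link delete "$dev"; done
```

Listing first and deleting second keeps the destructive step explicit.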

References